Bridging Structure and Emotion: A Generative Framework for Accessible and Expressive Knit Design

DOI:

https://doi.org/10.64509/jdi.11.58

Keywords:

Knit Pattern Generation, Generative AI in Fashion, LoRA Fine-Tuning, Semantic Conditioning, AI-Driven Design Tool

Abstract

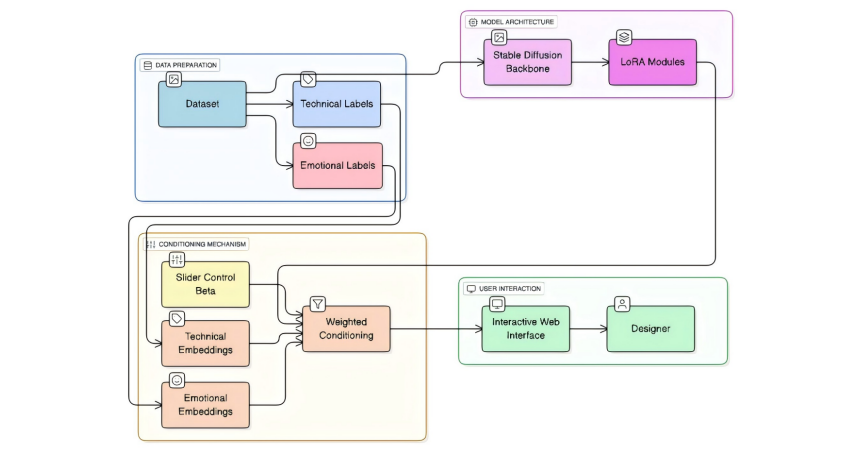

Knit pattern design is challenging because it demands both technical skill and creative vision. We introduce an accessible generative framework that combines Stable Diffusion, fine-tuned with LoRA, and an interactive web interface. Our system uses a dual-label dataset of over 3,000 images annotated with both technical attributes (stitch type, complexity) and semantic/emotional descriptors (e.g., cozy, elegant), so that outputs are structurally coherent as well as stylistically diverse. Compared with the baseline, the LoRA-tuned model shows strong domain specialization, producing clearer stitch definition and fewer non-knitting artifacts. The interactive platform includes an emotion-technical balance slider that lets users shift the emphasis between emotional and technical attributes. This framework positions generative AI as a creative partner that lowers technical barriers and expands the expressive possibilities of textile design.
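As background for the LoRA component, the general low-rank update from the original LoRA formulation (Hu et al., 2021) is shown below. The abstract does not state which Stable Diffusion layers were adapted or which rank r and scaling factor α were used, so the symbols here are generic, not the authors' settings. The pretrained weight W_0 stays frozen and only the two small factors A and B are trained.

```latex
h = W_0 x + \Delta W x = W_0 x + \frac{\alpha}{r} B A x,
\qquad W_0 \in \mathbb{R}^{d \times k},\;
B \in \mathbb{R}^{d \times r},\;
A \in \mathbb{R}^{r \times k},\;
r \ll \min(d, k)
```

For a hypothetical 768 × 768 projection with r = 4, the trainable update has r(d + k) = 4 × 1536 = 6,144 parameters, about 1.04% of the 589,824 parameters in the frozen matrix W_0. This small footprint is what makes LoRA practical for specializing a large model on a domain-specific dataset of a few thousand images.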
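The dual-label annotations and the balance slider can be illustrated with a small Python sketch. Everything here is hypothetical: the `KnitSample` record, its field names, and the `balance_weights` and `blend` helpers are illustrative only, and linear interpolation between a technical and an emotional conditioning vector is one plausible way to realize such a slider, not the method the authors describe. In a real Stable Diffusion pipeline, the vectors would be text-encoder embeddings of the two captions rather than short lists of floats.

```python
from dataclasses import dataclass, field
from typing import List, Sequence, Tuple


@dataclass
class KnitSample:
    """One dual-label record (hypothetical schema, not the authors' format)."""
    image_path: str
    stitch_type: str   # technical label, e.g. "cable"
    complexity: str    # technical label, e.g. "intermediate"
    emotions: List[str] = field(default_factory=list)  # semantic labels, e.g. ["cozy"]

    def technical_caption(self) -> str:
        # Caption built only from the technical attributes.
        return f"{self.stitch_type} knit stitch, {self.complexity} complexity"

    def emotional_caption(self) -> str:
        # Caption built only from the semantic/emotional descriptors.
        return ", ".join(self.emotions)


def balance_weights(slider: float) -> Tuple[float, float]:
    """Map a slider value in [0, 1] to (technical, emotional) weights.

    0.0 means purely technical conditioning; 1.0 means purely emotional.
    """
    if not 0.0 <= slider <= 1.0:
        raise ValueError("slider must lie in [0, 1]")
    return 1.0 - slider, slider


def blend(technical: Sequence[float], emotional: Sequence[float], slider: float) -> List[float]:
    """Linearly interpolate two conditioning vectors of equal length."""
    if len(technical) != len(emotional):
        raise ValueError("conditioning vectors must have the same length")
    w_t, w_e = balance_weights(slider)
    return [w_t * t + w_e * e for t, e in zip(technical, emotional)]


sample = KnitSample("img_0001.png", "cable", "intermediate", ["cozy", "elegant"])
print(sample.technical_caption())            # cable knit stitch, intermediate complexity
print(sample.emotional_caption())            # cozy, elegant
print(blend([1.0, 0.0], [0.0, 1.0], 0.25))   # [0.75, 0.25]
```

In this sketch, keeping the two label families as separate captions, rather than merging them into one prompt, is what gives a single slider two distinct signals to weigh against each other.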

License

Copyright (c) 2026 Authors

This work is licensed under a Creative Commons Attribution 4.0 International License.