Imitation Learning for Fashion Style Based on Hierarchical Multimodal Representation

DOI:

https://doi.org/10.64509/jdi.11.62

Keywords:

Fashion Recommendation, Multimodal Representation Learning, Style Modeling, Information Retrieval

Abstract

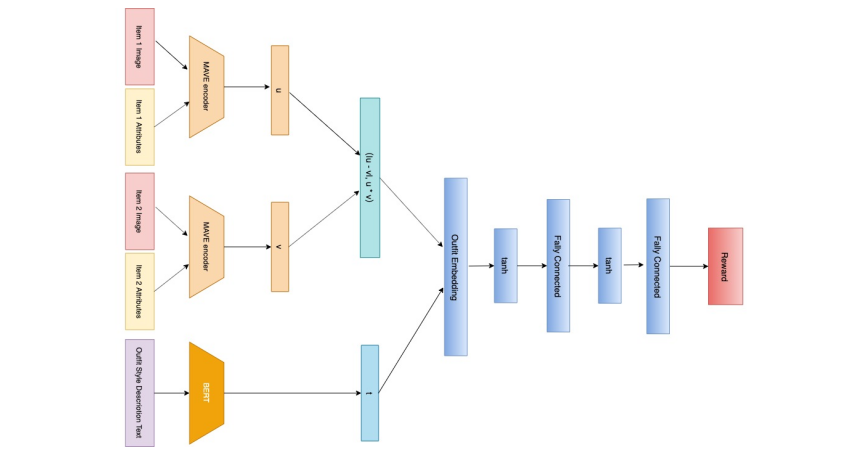

Fashion is a complex social phenomenon. People follow fashion styles demonstrated by experts or fashion icons. For a machine agent, however, learning to imitate fashion experts from demonstrations can be challenging, especially for complex styles in environments with high-dimensional, multimodal observations. Most existing research on fashion outfit composition uses supervised learning to mimic the behavior of style icons. These methods suffer from distribution shift: because the agent greedily imitates the given outfit demonstrations, subtle differences in its inputs can cause it to drift from one style to another. In this work, we propose an adversarial inverse reinforcement learning formulation based on hierarchical multimodal representation (HM-AIRL) that recovers reward functions during the imitation process. The hierarchical joint representation models the expert-composed outfit demonstrations more comprehensively, which supports recovery of the reward function. We demonstrate that the proposed HM-AIRL model recovers reward functions that are robust to changes in multimodal observations, enabling us to learn policies under significant variation between different styles.
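
For readers unfamiliar with adversarial inverse reinforcement learning, the sketch below illustrates the general discriminator form used in AIRL (Fu et al., 2018), not the HM-AIRL model itself. It assumes a scalar score `f_value` for a state-action pair, which in a model like the one described above would presumably be computed from the multimodal outfit representation, and the log-probability `log_pi` that the current policy assigns to the same action. All function names are hypothetical and chosen for illustration only.

```python
import math


def airl_discriminator(f_value: float, log_pi: float) -> float:
    """AIRL discriminator D = exp(f) / (exp(f) + pi), i.e. sigmoid(f - log pi).

    f_value: learned score for a state-action pair (a plain float here;
             an assumption standing in for a learned network output).
    log_pi:  log-probability of the action under the current policy.
    """
    z = f_value - log_pi
    # Numerically stable sigmoid.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def airl_reward(f_value: float, log_pi: float) -> float:
    """Reward passed to the policy: log D - log(1 - D) = f - log pi."""
    return f_value - log_pi


def discriminator_loss(expert, policy) -> float:
    """Binary cross-entropy over (f_value, log_pi) pairs.

    Expert pairs are labeled 1 and policy-generated pairs are labeled 0.
    """
    loss = 0.0
    for f_value, log_pi in expert:
        loss -= math.log(airl_discriminator(f_value, log_pi))
    for f_value, log_pi in policy:
        loss -= math.log(1.0 - airl_discriminator(f_value, log_pi))
    return loss / (len(expert) + len(policy))
```

In this form the discriminator is trained to separate expert demonstrations from policy samples, while the policy is updated to maximize `airl_reward`; the two alternate until the policy's outfits become hard to distinguish from the expert's.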

License

Copyright (c) 2026 Authors

This work is licensed under a Creative Commons Attribution 4.0 International License.